The Emotion of Intention

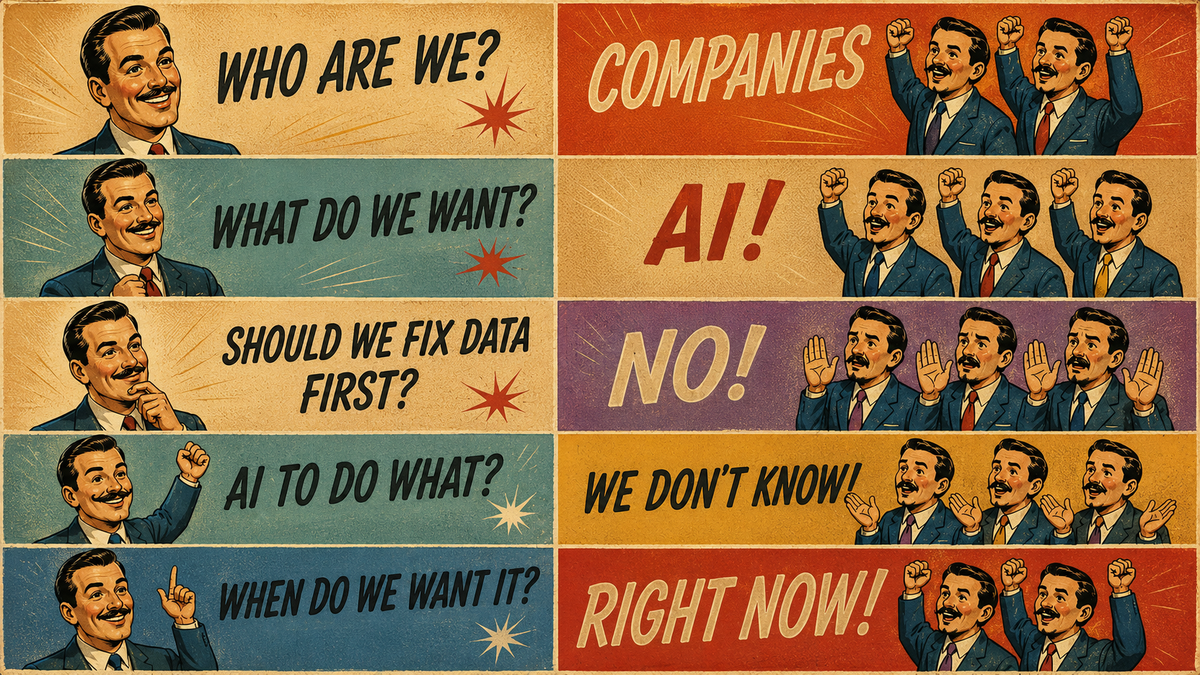

The rush to AI came before the strategy. Underneath the chaos lies one principle that will never change: the emotion of intention is the soul of every meaningful work.

A meme is circulating right now that captures this moment precisely. It runs like a protest chant: "Who are we? Companies. What do we want? AI. Should we fix our data first? No. AI to do what? We don't know. When do we want it? Right now."

It is funny. It is also an accurate transcript of what actually happened across thousands of organizations between 2023 and today. And more importantly, it describes a pattern that is still running inside companies that have not yet had to live with the consequences.

Every major technology wave produces the same behavior. The pressure builds fast. Boards want a strategy. Investors want a roadmap. Competitors are making announcements. And so companies make announcements too, before they have figured out what they are actually announcing.

The layoffs came first. The strategy came later. For many organizations, that strategy has not yet arrived.

The ones living with the consequences are worth paying attention to.

The proof is in the rehiring

Nearly 55,000 job cuts were directly attributed to AI in 2025. That is a large number. It is also not a number. Every one of those is a person who updated a resume at a kitchen table at midnight. Someone who had a hard conversation with their partner about what comes next. Someone who was told, often with genuine care and a severance package, that a spreadsheet had determined their role was no longer required.

Klarna became the most visible example. The company announced that its AI assistant was doing the work of 700 customer service agents and celebrated it publicly. Then quality declined. Customers revolted. The company quietly began hiring humans again. Forrester surveyed more than 1,000 executives and found that 55% now regret replacing workers with AI. Gartner projects that half of all companies that cut jobs for AI-related reasons will rehire for similar roles by 2027. Robert Half has a name for the people coming back: AI boomerangs.

The cost of the rush was paid twice. Once in severance. Once in rehiring.

An MIT study found that just 5% of the companies it examined were getting any measurable financial return from their generative AI investments. Five percent. That is not a technology failure. The technology worked. It did what it was pointed at. What failed was the assumption underneath the decision: that the function being replaced was just the function. That a customer service representative was executing transactions. That a content writer was producing words. That a software engineer was generating code.

The function always contained more than the function. That is true of almost every role in every organization, and it is most visible precisely when you try to remove the person doing it. The rehiring wave is what happens when you run that experiment and get the result back.

Tools have requirements

I have spent thirty years watching people confuse access to tools with mastery of craft. It is one of the most consistent patterns in live production, and it never gets less instructive.

I have watched an inexperienced engineer sit down behind a multimillion-dollar sound system and make it sound like garbage. Not because the system failed. Because the system was only as capable as the hands running it. And I have watched a seasoned operator take gear that had no business performing at that level and make it sound like gold. The room heard the operator, not the equipment. The tool was a vehicle for something the tool itself could not supply.

The Japanese have a word for the kind of mastery that makes this possible: shokunin. It describes a craftsman who has devoted their life, completely and without reservation, to a single discipline. Not to become good at it. Not to become efficient at it. To become one with it, until the separation between the craftsman and the craft ceases to exist in any meaningful way. The shokunin sushi master who has spent fifty years making the same rice. The shokunin carpenter whose hand knows what the wood needs before the plane touches it. The tool is not something they pick up. It is an extension of pure intention.

This is not mysticism. It is the natural result of deep, patient investment that most modern organizations do not have time for and most technology adoption cycles do not account for. The shokunin is not better than the novice because they have a better tool. They are better because the tool has nothing left to teach them. Every capability it possesses has been absorbed, tested, refined, and integrated until the gap between what the tool can do and what the craftsman can draw from it has closed entirely.

The word that separates the shokunin from the novice is not skill. Skill is the result. The word is intention. The craftsman knows what they are trying to make before they touch the tool. The tool serves that intention. Without it, the tool simply executes, and execution without intention produces output without meaning. It produces something that exists but does not matter.

This is precisely the failure mode playing out across organizations right now. AI is extraordinarily good at execution. It is fast, consistent, and tireless. But it carries no intention of its own. It cannot. Intention requires understanding what the outcome is for, who it serves, and why it matters. Those are human questions. They have always been human questions. Handing them to a tool does not answer them. It just moves the responsibility somewhere it cannot be held.

The organizations seeing real returns are not the ones with the most sophisticated models. They are the ones who invested seriously in understanding the tool before pointing it at consequential work. Who cleaned the data before they ran the process. Who defined the purpose before they pulled the trigger. Who kept experienced people in the room, because experienced people know what good output looks like, and a system with no one like that in the loop has no way to know when it is producing garbage.

The first requirement is clean data. AI does not check your work before it scales it. It takes whatever you give it and operates at speed and volume. If the information going in is incomplete, inconsistent, or trapped in silos that have never been reconciled, the output reflects that, faster and at greater scale than any human process ever could. Garbage in, garbage out is not a new concept. AI simply makes the consequences harder to hide and slower to repair.

The second requirement is purpose. Not a category of purpose. An actual one. AI to do what, exactly? What outcome are we trying to produce? What does success look like? What is the rollback plan if it does not work? Without that clarity, a tool will do whatever it can do. Not whatever you need it to do. Those are not the same thing.

Simple does not equal easy. The simplest version of anything requires the most work to get right. The companies winning with AI right now are not the ones that moved fastest. They are the ones that took the prerequisites seriously before they reached for the tool.

The soul of the thing

There is a phenomenon that has quietly followed the AI content explosion, and most people have felt it without knowing what to call it. You are scrolling through images, reading copy, or watching a video, and something registers as wrong before you can explain why. The composition is technically correct. The words are grammatically sound. But something is missing, and your brain knows it on contact. Not intellectually, but viscerally, the way you know a room is cold before you have registered the temperature.

What is missing is the emotion of intention.

A painter does not simply apply color to canvas. Every decision (the weight of the brushstroke, the tension between two colors, the choice of what to leave unfinished) carries the emotional investment of someone who had something to say and chose this as the way to say it. A graphic artist working on a single social media post brings the same thing: a point of view, a reason for every choice, an invisible architecture of meaning underneath the visible surface of the work. You do not see any of that consciously. You feel it. And when it is absent, you feel that too.

This is what AI cannot generate. It can replicate the surface of intentional work with remarkable accuracy. It can produce something that looks right, sounds right, reads right. But it cannot want anything. It cannot care about the outcome. It cannot bring the emotional investment that transforms competent execution into something that actually moves a person.

The artists, the designers, the writers, the operators who understand this are the true Wizards of Oz. Pull back the curtain on any AI-generated work that genuinely lands, that stops the scroll, that makes someone feel something real, and there is always a human behind it. Someone who brought taste, emotional investment, and a clear point of view to every decision the tool was asked to make. The tool executed. The human meant it.

This is why AI can never replace the emotion of intention. It is the soul of every masterpiece, and it is equally the soul of a single well-crafted sentence in a social media post that makes a stranger pause and think. The scale of the work is irrelevant. The presence or absence of intention is everything. We recognize it the same way we recognize a human face in a crowd. Instantly, without effort, and without being able to fully explain how we knew.

The human in the loop is not a bug

There is a phrase that gets used in AI governance discussions: human in the loop. It is usually meant as a safeguard. A check on the system. A way of saying that a person reviews the output before anything consequential happens.

That framing is too small. It also misses the point.

The human in the loop is not a safety check. The human is the intelligence. They are why the loop exists at all.

What the rehiring wave revealed, across industry after industry, is that the roles being eliminated contained something that was not visible in the job description and did not appear on any org chart. Judgment. The ability to read a situation that does not match any pattern in the training data. Escalation. The knowledge of when a conversation has moved beyond what the system can handle and needs to go somewhere with more authority or more care. Trust. The simple fact that a human being on the other end of a difficult moment changes what is possible in that moment.

AI operates on pattern. The space between what a customer asked and what a customer actually needed operates on relationship. Those are different domains. The best use of AI is in the first one. The human belongs in the second. The mistake is not using AI. The mistake is not knowing which domain you are actually in, and putting the wrong intelligence in charge of the wrong work.

Think of it the way you think of an electrical circuit. The power can be available, the wiring can be in place, everything can be connected and ready. But until the switch closes, nothing lights up. The human is the switch. Without them, the circuit produces no meaningful energy. It just waits.

The companies that got this right did not treat their people as a cost to be minimized. They treated them as the thing that made the rest of the system worth running.

A new category of value

When the AI wave arrived, IKEA looked at its workforce and asked a different question than most companies asked.

The common question was: what can AI replace? It is a subtraction question. It starts with the assumption that the work currently being done by a human could be done by a machine, and asks how much of that transfer is possible. It is the wrong question, not because the answer is always zero, but because it starts in the wrong place. It treats the human as the problem to be solved rather than the asset to be built on.

IKEA asked instead: what can AI make possible that was not possible before?

The answer they found was that a customer-facing employee, equipped with AI tools that could model living spaces, recommend product configurations, and visualize outcomes in real time, could become something they had never been: an interior design consultant. The role did not shrink. It expanded. The person did not disappear. They became more capable than their job title had ever allowed them to be.

It is now the fastest-growing revenue stream in the company.

That result is only accessible from the second question. The first question leads to a spreadsheet with a headcount reduction at the bottom. The second question leads to a new category of value that did not exist before. But getting there requires the prerequisites: clean data, a clear definition of what the expanded role is trying to accomplish, time to train people to work alongside the technology, and the foundational belief that the human in the process is not a cost center but a capability waiting to be amplified.

Intentional is the word that matters. You do not stumble into this model. You design for it.

Where we are in the cycle

Every major technology has followed a version of the same arc, and understanding that arc is one of the most practically useful things a leader can do right now.

The economist Erik Brynjolfsson spent years studying the productivity paradox: the strange phenomenon where a transformative technology arrives, gets adopted widely, and produces almost no measurable productivity gains for years, sometimes decades. Electricity is the clearest example. In the early decades of the twentieth century, factories across America began replacing their steam engines with electric motors. And productivity barely moved. Not because electricity did not work. It worked exactly as advertised. But the factories had been designed around steam. The layouts, the workflows, the management structures, all of it had been built for a different power source. Dropping an electric motor into a steam-era factory just gave you a cleaner version of the same constraints. The gains only arrived when organizations redesigned themselves from the ground up around what electricity actually made possible. That took thirty years.

The internet followed the same pattern. Enormous investment through the nineties, a catastrophic correction in 2000 and 2001, and then the actual value: not in the first wave of companies that simply moved existing businesses online, but in the second wave that reimagined what a business could be when distance was no longer a constraint.

Joseph Schumpeter called this creative destruction. The technology does not just replace what was there before. It destroys the structures built around the old way of doing things and forces the construction of new ones. That process is not clean or comfortable, and it does not happen on the timeline that investors or boards prefer.

We are somewhere inside that process right now with AI. The inflated expectations have collided with operational reality. The companies that laid off entire departments are quietly rebuilding them. The tools that were supposed to replace human judgment are demonstrating, at scale, exactly where human judgment is irreplaceable. The gap between the promise and the current reality is not evidence that the technology will fail. It is evidence that we are in the expected middle of a cycle that has played out the same way every time before.

The equilibrium is coming. It is not a moment where AI wins or where humans win. It is the point where organizations finally understand what the tool is genuinely for, stop asking it to do things it cannot do, and build workflows that let it do what it does exceptionally well while keeping the right people in the right places. That equilibrium always arrives. It just requires the willingness to learn from the collision with reality rather than simply absorbing the loss and repeating the mistake.

The companies that will lead at that point are not the ones that moved first. They are the ones paying honest attention right now.

Before you reach for the tool

The question is not whether to use AI. That conversation is over. The question is what you are trying to build with it, and whether you have done the work the building requires.

Fix the data. Define the purpose. Keep people in the process, not as a concession to sentiment, but because the work requires what they bring. Know which domain you are operating in, and be honest about where pattern recognition ends and human judgment begins.

The best work I have ever seen, in thirty years of building experiences for people, was not produced by technology alone. It was produced by people who knew exactly what they were trying to create, who did the invisible prerequisite work with patience and care, and who used every tool available to bring that vision to life. The shokunin does not pick up the tool and hope. They have already done the work that makes the tool worth picking up.

The tool does not create the soul of the work. You do. That is the emotion of intention, and it is not a feature that can be engineered or a capability waiting to be unlocked in the next model release. It is the irreducible human contribution to everything worth making.

It is the one thing that has never changed, and will never change, in any craft, across any century, with any tool.