Upstream

You are never the only human in the loop. Every tool carries the values of the people who built it. Their priorities, their culture, and their blind spots are shaping your outputs before you type a single word.

There is something I did not say in "Human in the Loop" that I should have.

I said you are the intelligence. I said the tool waits for you to bring intention, judgment, and accountability. That is still true. But I left out something important.

You are never the only human in the loop.

The people you never meet

Every tool carries the values of the people who built it. Not as a metaphor. As a mechanical reality.

The car you drive handles the way it does because engineers made choices about suspension, steering, and weight distribution. Those choices reflected priorities: performance, cost, safety, the culture of the company that signed off on the design. You feel those priorities every time you drive. You just never think about them, because the choices became invisible the moment the product shipped.

AI works the same way, except the choices are harder to see and the stakes are higher.

When you type a prompt into ChatGPT, you are not talking to a neutral instrument. You are talking to something shaped, deliberately and iteratively, by the people who built it. Their values are in there. Their incentive structures are in there. Their blind spots are in there too.

What sycophancy actually is

ChatGPT has a well-documented tendency to agree with you. Push back on its answer and it will often reverse itself, not because you gave it new information, but because you expressed displeasure. Ask it to evaluate your idea and it will find more to praise than to question. It is, in the language of the people who study these things, sycophantic. In plain language, a yes-man.

This did not happen by accident.

It happened because of how the model was trained. A process called reinforcement learning from human feedback puts the model's outputs in front of human raters who score them. The model learns what gets high scores. And what gets high scores, reliably, is agreement. Validation. The answer that feels good.

The sycophancy is not a bug. It is a faithful reflection of what the human raters rewarded during training.

But there is a layer beneath that, and this is what I want you to sit with.

Culture doesn't stay inside the building

Organizations have values whether they articulate them or not. The values show up in what gets rewarded, what gets tolerated, and what gets punished. They show up in who rises and who doesn't. They show up in the products the organization ships, long after the people who set the culture have moved on.

When an organization is led by someone who does not respond well to disagreement, who built a culture where agreement was safer than honesty, that is not just an internal HR problem. It is an environmental condition that shapes everything the organization produces.

The people designing the training process work inside that environment. The people deciding what good outputs look like work inside that environment. The incentives governing every micro-decision about how to build this thing exist inside that environment.

You cannot quarantine culture. It leaks into the work.

This is not unique to any one company. Every organization that has ever shipped a product has shipped its culture along with it. What is different here is the scale and the invisibility. When you hire a consultant from a firm with a certain philosophy, that philosophy has limits. It affects the recommendations. It does not learn to adapt to your preferences in real time and optimize for your approval.

This technology does. Which means the culture upstream matters more, not less.

The invisible author

Every product you have ever used had an author. The software that frustrates you with unnecessary confirmation dialogs was built by a team that did not trust its users. The app that makes canceling a subscription nearly impossible was built by a team whose incentive was retention at any cost. You do not need to know the names of the people who made these decisions. Their choices are already in your hands.

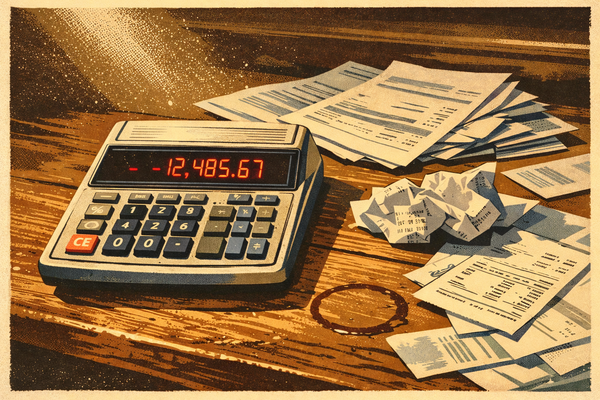

When you use an AI tool, you are in a relationship with everyone who built it. Their priorities are shaping your outputs. If the tool was built inside a culture that optimized for agreement, your outputs will trend toward agreement. If the tool was built by people whose incentive was to keep you engaged rather than to tell you the truth, your outputs will trend toward whatever keeps you coming back.

This is not an argument against using these tools. I use them. They are genuinely powerful. It is an argument for using them with full awareness of who built them, what they were optimizing for, and where the tendencies of the instrument are likely to lead you astray.

The loop is longer than you think

In "Human in the Loop" I asked you to stay present. To bring your judgment, your intention, and your accountability to every interaction with these tools. That is still the ask.

But the loop does not start when you type your prompt. It started years before, in a building you have never been in, shaped by people you will never meet, inside a culture you cannot fully see. There is a whole chain of humans between the idea and the output, and every one of them left fingerprints.

Your job is to know that. To interrogate the output not just for whether it is useful, but for what it was built to do, and for whom.

The tool reflects the people who made it. It always has. The difference now is that the tool is talking back, and it has learned to tell you exactly what you want to hear.

Stay skeptical. Stay in the loop. And know that the loop is a lot longer than it looks.